The AI Slop Loop: How Algorithmic Misinformation and Recursive Hallucinations Are Compromising Search Integrity

The rapid integration of generative artificial intelligence into global search engines has produced a phenomenon that industry experts now call the AI Slop Loop: a recursive cycle in which fabricated information is generated by AI, indexed by search engines, and subsequently cited by other AI models as verified fact. This systemic failure in information retrieval was recently highlighted by a series of high-profile incidents involving a non-existent Google algorithm update, exposing a critical vulnerability in the systems that billions of people use to navigate the internet. At the heart of the issue is the transition from traditional search, which directs users to source materials, to AI-driven "answers" that synthesize information without a robust mechanism for distinguishing human-verified data from AI-generated hallucinations.

The Genesis of a Digital Hallucination

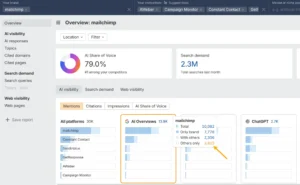

The vulnerability of modern search systems was laid bare when industry analysts began noticing detailed reports from AI platforms regarding a "September 2025 Perspective Core Algorithm Update." According to responses generated by Perplexity, an AI-native search engine, this supposed update from Google focused on "deeper expertise" and the "completion of the user journey." The responses were highly specific, providing nuances about ranking shifts and content quality standards.

However, the update never occurred. Industry experts, including prominent SEO consultant Lily Ray, noted several immediate red flags: Google has not used branded names for core updates in several years, and the term "Perspectives" already existed as a specific Search Engine Results Page (SERP) feature. Upon investigation, it was discovered that the AI had retrieved its information from several SEO agency blogs. These blogs had published AI-generated content that confidently fabricated the details of the non-existent update.

This incident illustrates a "game of telephone" in the digital age. An AI-generated article hallucinates a detail; that detail is scraped by automated content pipelines; multiple websites then regurgitate the same misinformation to capture "freshness" signals for search engines. Eventually, enough citations exist for Retrieval-Augmented Generation (RAG) systems, like those powering Perplexity or Google’s AI Overviews, to treat the fabrication as a consensus-backed fact.
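The gap between "many pages say it" and "many independent sources say it" can be illustrated with a minimal sketch. It is not how Perplexity or Google actually score sources. The `Page` type, the `copied_from` provenance field, and the example URLs are all hypothetical, and real pipelines rarely have provenance this clean.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Set


@dataclass(frozen=True)
class Page:
    url: str
    claim: str
    copied_from: Optional[str] = None  # hypothetical provenance field


def naive_support(pages: List[Page], claim: str) -> int:
    """Count every page repeating the claim, as a frequency-based ranker might."""
    return sum(1 for p in pages if p.claim == claim)


def independent_support(pages: List[Page], claim: str) -> int:
    """Count distinct origins by following each copy back to its root page."""
    by_url: Dict[str, Page] = {p.url: p for p in pages}
    roots: Set[str] = set()
    for page in pages:
        if page.claim != claim:
            continue
        current, visited = page, {page.url}
        # Walk the copy chain; stop at an unknown parent or a loop.
        while current.copied_from in by_url and current.copied_from not in visited:
            current = by_url[current.copied_from]
            visited.add(current.url)
        roots.add(current.url)
    return len(roots)


# Illustrative data: one hallucinated post and four scraped copies of it.
CLAIM = "Google shipped a 'Perspective' core update"
pages = [Page("agency-a.example/post", CLAIM)] + [
    Page(f"scraper-{i}.example/post", CLAIM, copied_from="agency-a.example/post")
    for i in range(4)
]

print(naive_support(pages, CLAIM))        # 5 pages repeat the claim
print(independent_support(pages, CLAIM))  # 1 independent origin
```

Counted naively, the fabrication looks like a five-source consensus; traced to its origin, it is a single unverified post.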

Experimental Verification of the Slop Loop

To determine the ease with which search AI could be manipulated, investigators from the BBC and the New York Times conducted controlled tests. In one instance, a fictitious article was published claiming that Google’s January 2026 core update—another non-existent event—was "approved between slices of leftover pizza." Within 24 hours, Google’s AI Overviews began serving this fabricated detail to users.

Remarkably, the AI did not just repeat the lie; it contextualized it. The system linked the fabricated pizza detail to real historical data regarding Google’s 2024 struggles with pizza-related search queries, creating a narrative that appeared even more plausible to the average user. Similarly, Thomas Germain of the BBC published a satirical claim on a low-traffic personal site asserting he was the world’s top tech journalist at eating hot dogs. Within a day, both Google’s Gemini and ChatGPT surfaced this "fact" in response to niche queries.

While Google defended these instances as "data voids"—scenarios where a lack of high-quality information leads to lower-quality AI responses—critics argue that the scale of the deployment makes such excuses insufficient. With AI Overviews reaching over two billion monthly active users as of mid-2025, the impact of these "voids" is no longer a marginal concern.

Quantifying the Scale of Misinformation

The implications of AI hallucinations become clearer when set against global search volume. Data published in a New York Times study, "How Accurate Are Google’s A.I. Overviews?", suggested that the system is accurate approximately 91% of the time. While a 9% error rate might seem manageable in a laboratory setting, at real-world scale the absolute number of errors is staggering.

Google processes an estimated 5 trillion searches annually, or roughly 570 million per hour. Taken at face value, a 9% error rate across that volume implies on the order of 50 million erroneous answers every hour, assuming every search returns an AI Overview. Furthermore, the study found that 56% of even the "accurate" responses were "ungrounded," meaning the sources cited by the AI did not actually support the claims being made. This suggests that more than half the time, a user attempting to verify a correct AI answer would find that the cited sources do not back it up.
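The arithmetic behind these figures can be checked with a short back-of-the-envelope sketch. The inputs are the numbers quoted above; the `overview_share` parameter is an added assumption, since the article does not say what fraction of searches actually display an AI Overview.

```python
# Figures quoted in the article.
SEARCHES_PER_YEAR = 5e12             # "an estimated 5 trillion searches annually"
ACCURACY = 0.91                      # accurate "approximately 91% of the time"
UNGROUNDED_SHARE_OF_ACCURATE = 0.56  # share of accurate answers that are ungrounded
HOURS_PER_YEAR = 365 * 24            # 8,760


def wrong_answers_per_hour(overview_share: float = 1.0) -> float:
    """Expected erroneous answers per hour.

    overview_share is an assumption, not a reported figure: the fraction of
    searches that display an AI Overview (1.0 means every search).
    """
    return SEARCHES_PER_YEAR * overview_share * (1 - ACCURACY) / HOURS_PER_YEAR


def accurate_and_grounded_share() -> float:
    """Share of all answers that are both accurate and backed by their sources."""
    return ACCURACY * (1 - UNGROUNDED_SHARE_OF_ACCURATE)


print(f"{wrong_answers_per_hour():,.0f} wrong answers per hour")  # roughly 51 million
print(f"{accurate_and_grounded_share():.1%} accurate and grounded")
```

Under these inputs, only about 40% of all answers are both correct and supported by the sources they cite.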

The study also noted a concerning trend: as models iterate, the grounding of information appears to be degrading. The percentage of ungrounded responses rose from 37% in Gemini 2 to 56% in Gemini 3, indicating that newer, more "creative" models may be sacrificing factual tethering for fluency and speed.

Official Responses and Technical Mitigations

The response from AI companies has been a mix of social media engagement and technical updates. When the fake "Perspective" update went viral on social media, Perplexity CEO Aravind Srinivas personally acknowledged the issue, tagging his technical team to investigate the failure in their RAG pipeline.

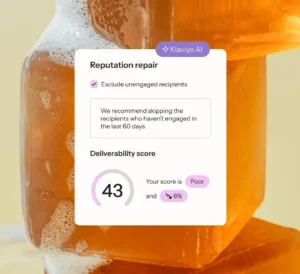

OpenAI has taken a different approach by introducing tiered models with varying levels of "thinking" time. The GPT-5.4 model, currently reserved for paying subscribers, utilizes a multi-round reasoning process designed to filter out low-quality or spammy information. According to OpenAI’s internal data, GPT-5.4 is 33% less likely to produce false individual claims compared to its predecessors. The model has also been observed to specifically prioritize high-authority domains—such as official documentation or recognized industry leaders—when performing web-based retrieval.

However, these improvements have created a "truth gap." While the most capable models are significantly more accurate, the vast majority of the user base remains on free tiers. Approximately 94% of ChatGPT’s 900 million weekly active users (roughly 846 million people) use the free version, which relies on faster, cheaper, and more hallucination-prone models.

The Economic Tiering of Accuracy

The current trajectory of the AI industry suggests a future where factual accuracy becomes a premium commodity. By paywalling the most rigorous reasoning models, companies are effectively creating a digital divide. The general public, interacting with free search tools and standard AI tiers, is exposed to the "Slop Loop" and recursive misinformation, while those with the means to pay receive a version of reality that has been more thoroughly fact-checked.

This shift has also fundamentally changed the user experience of searching for information. Traditionally, search engines acted as a directory, placing the burden of evaluation on the human user. AI search, by contrast, presents a synthesized conclusion with an authoritative tone. Because these systems rarely admit uncertainty, users find themselves in a paradoxical situation: the tool designed to save them time now requires them to perform double the work—first fact-checking the AI’s summary, and then conducting the original research they intended to do.

Broader Impact and Industry Implications

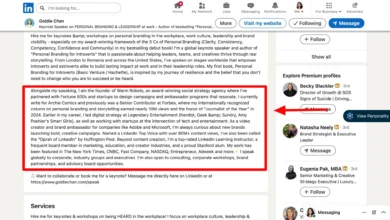

For marketers, publishers, and information professionals, the "AI Slop Loop" represents a significant threat to brand safety and authority. If a brand’s information is scraped and misinterpreted by an AI, that misinterpretation can become the "official narrative" in a matter of hours. Conversely, the ease with which fake news can be "laundered" through AI search results provides a powerful tool for bad actors seeking to spread disinformation.

The long-term concern remains the "copy of a copy" problem. As more AI-generated content is published to the web, the training sets for future LLMs become increasingly saturated with their own previous hallucinations. Without a drastic shift in how these models verify information—moving away from "frequency of mention" and toward "verifiability of source"—the integrity of the global knowledge base faces a period of unprecedented degradation.
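The difference between the two selection rules can be made concrete with a toy sketch. Everything in it is hypothetical: the `Mention` type, the `cites_primary_evidence` flag (which stands in for whatever verification a real system would perform), and the example sources. It is an illustration of the idea, not any vendor's ranking method.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Mention:
    claim: str
    source: str
    cites_primary_evidence: bool  # hypothetical flag set by some upstream check


def pick_by_frequency(mentions: List[Mention]) -> Optional[str]:
    """Return the most-repeated claim, regardless of who repeats it."""
    counts = Counter(m.claim for m in mentions)
    return counts.most_common(1)[0][0] if counts else None


def pick_by_verifiability(mentions: List[Mention]) -> Optional[str]:
    """Return the claim with the most evidence-backed mentions, or None to abstain."""
    counts = Counter(m.claim for m in mentions if m.cites_primary_evidence)
    return counts.most_common(1)[0][0] if counts else None


# Illustrative data: four unsupported copies versus one evidence-backed source.
FAKE = "a branded core update rolled out"
REAL = "no such update was announced"
mentions = [Mention(FAKE, f"blog-{i}.example", False) for i in range(4)] + [
    Mention(REAL, "official-channel.example", True)
]

print(pick_by_frequency(mentions))          # the fabricated claim wins on volume
print(pick_by_verifiability(mentions))      # the evidence-backed claim wins
print(pick_by_verifiability(mentions[:4]))  # None: nothing verifiable, so abstain
```

The third call shows the design choice that matters most here: when no mention can be verified, the verifiability rule returns nothing instead of promoting the loudest claim.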

As the industry moves forward, the burden of proof has shifted. In the era of the AI Slop Loop, the volume of information is no longer a proxy for its accuracy. Until search engines can effectively distinguish between human expertise and automated "slop," the responsibility for maintaining a factual reality remains, perhaps more than ever, in the hands of the human reader.