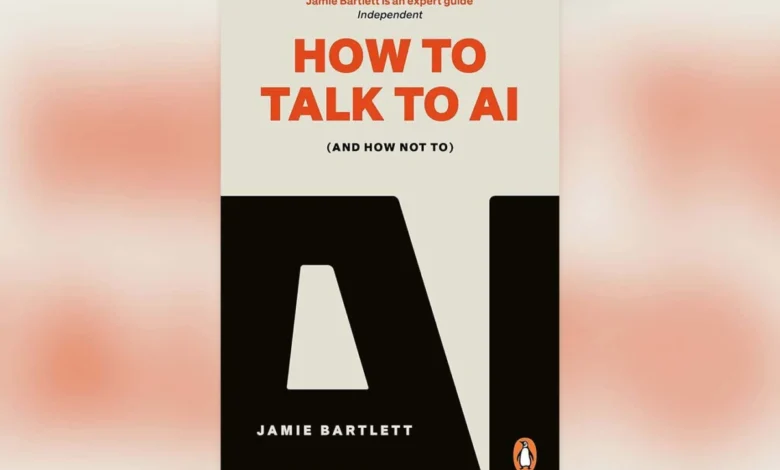

New Scientist Recommends Jamie Bartlett’s Insightful How to Talk to AI

The books, TV, games and more that New Scientist staff have enjoyed this week

As artificial intelligence shapes more of daily life, knowing how to interact with it effectively matters more than ever. Bethan Ackerley, a subeditor at New Scientist, recommends Jamie Bartlett’s recent book, "How to Talk to AI," as an essential guide to this landscape. The book argues that despite the widespread adoption of AI chatbots, most users lack the training and critical awareness to use them well or to protect themselves from their pitfalls.

Bartlett’s "plain-speaking guide" posits that most people interact with AI superficially, which leaves them susceptible to misinformation and to unhealthy emotional dependence. The book emphasizes that prompting an AI well is not merely a technical skill but one deeply intertwined with self-awareness. It challenges readers to consider how much they know about AI’s inner workings and how their own biases might shape the responses they receive.

The core thesis of Bartlett’s work is that a lack of proper education on AI interaction can lead to significant societal and individual consequences. As AI technologies become more integrated into daily life, from search engines and content creation to customer service and personal assistants, the ability to discern credible information from AI-generated falsehoods becomes a critical survival skill. Ackerley notes that even those who are wary of AI chatbots can benefit from Bartlett’s insights, which foster a healthy skepticism essential for navigating an AI-saturated world.

The Growing Pervasiveness of AI and the Need for Critical Engagement

The proliferation of AI technologies has been one of the defining technological narratives of the 21st century. From the early days of rule-based expert systems to the current era of large language models (LLMs), AI’s capabilities have expanded dramatically. Chatbots powered by LLMs, such as those developed by OpenAI, Google, and Meta, have captured the public imagination with their ability to generate human-like text, translate languages, produce creative writing, and answer questions conversationally.

However, this rapid advancement has outpaced the public’s ability to understand and critically engage with these tools. Research from organizations like the Pew Research Center has consistently shown a gap between public awareness of AI and public comprehension of its underlying mechanisms and implications. For instance, a 2023 study indicated that while a significant portion of the population has heard of AI, a much smaller percentage feel they understand how it works or can identify its potential risks.

"How to Talk to AI" emerges as a timely intervention, aiming to bridge this knowledge gap. Bartlett argues that the design of these AI systems, coupled with user inexperience, creates a fertile ground for misunderstandings and manipulation. The book delves into the psychological aspects of human-AI interaction, exploring how the anthropomorphic nature of chatbots can lead users to attribute human-like intelligence and intentions to them, fostering unwarranted trust or emotional attachment.

The Science Behind the Conversation: Understanding AI’s Limitations

Bartlett’s approach, as highlighted by Ackerley, emphasizes the importance of understanding the underlying principles of AI, even for the non-technical user. AI chatbots, while impressive, are not sentient beings. They operate by identifying patterns in vast datasets and generating responses based on those patterns. This means they can:

- Hallucinate: Produce convincing-sounding but factually incorrect information. According to the fact-checking organization FactCheck.org, LLMs can generate misinformation with a high degree of confidence, making it difficult for users to identify errors.

- Exhibit Bias: Reflect the biases present in the data they were trained on. This can lead to discriminatory or unfair outputs, as documented in numerous reports by organizations like the Algorithmic Justice League, which have highlighted racial and gender biases in AI systems.

- Lack True Understanding: Operate on statistical probabilities rather than genuine comprehension. They do not "know" or "believe" in the human sense, which can lead to nonsensical or harmful outputs when faced with novel or complex queries.

Bartlett’s book, therefore, serves as an educational tool, demystifying AI and empowering users with the knowledge to question its outputs. It encourages a more analytical approach, prompting users to consider the source of the information, the potential biases at play, and the limitations of the AI model itself.
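To make the point about statistical pattern-matching concrete, here is a minimal sketch of a toy "bigram" text generator. It is far simpler than any real LLM and is not taken from Bartlett’s book; the training text and the names `CORPUS`, `build_bigram_table` and `generate` are invented for this illustration. The generator only ever picks a word that followed the previous word somewhere in its training text, so it can fluently assemble a sentence such as "the moon orbits the sun" without any notion of whether that is true.

```python
import random
from collections import defaultdict

# Tiny invented training text (an assumption for illustration only).
CORPUS = (
    "the moon orbits the earth . "
    "the earth orbits the sun . "
    "the sun is a star ."
)

def build_bigram_table(text):
    """Map each word to the list of words that followed it in the text."""
    words = text.split()
    table = defaultdict(list)
    for current, following in zip(words, words[1:]):
        table[current].append(following)
    return table

def generate(table, start, max_words=8, seed=0):
    """Repeatedly sample a next word that was seen after the current one."""
    rng = random.Random(seed)  # fixed seed so a run can be repeated
    output = [start]
    while len(output) < max_words and output[-1] in table:
        output.append(rng.choice(table[output[-1]]))
    return " ".join(output)

table = build_bigram_table(CORPUS)
print(generate(table, "the"))
```

Every adjacent pair of words in the output appeared in the training text, yet the sentence as a whole may be false. That is a miniature version of the gap between fluency and accuracy described above.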

A Timeline of AI Interaction: From Simple Queries to Complex Dialogues

The way humans interact with AI has evolved significantly over time, mirroring the advancements in AI technology itself.

- Early AI (1950s-1980s): Interaction was primarily through command-line interfaces and structured queries. Users needed to understand specific programming languages or query syntaxes. The focus was on logical reasoning and problem-solving.

- Expert Systems (1980s-1990s): These systems allowed for more natural language input for specific domains, but interactions were still largely deterministic and rule-based. Users asked questions within a predefined knowledge base.

- Early Chatbots (1960s-Early 2000s): Simple chatbots such as ELIZA (1966) and, decades later, ALICE (1995) demonstrated basic conversational abilities. However, they relied on pre-programmed scripts and pattern matching, often leading to superficial or repetitive interactions.

- Virtual Assistants (2010s onwards): Siri, Alexa, and Google Assistant brought AI into mainstream consumer devices. Interaction became more voice-based and focused on task completion (setting alarms, playing music, providing weather updates). While more conversational, they still had limited understanding and scope.

- Generative AI Chatbots (Late 2010s-Present): The advent of transformer architectures and large language models has revolutionized AI interaction. Chatbots like ChatGPT, Bard (since renamed Gemini), and Claude can engage in complex, multi-turn conversations, generate creative content, and process vast amounts of information. This is the era Bartlett’s book addresses, where the sophistication of AI necessitates a new level of user literacy.

Bartlett’s work provides a crucial guide for this current phase, emphasizing that the shift from simple commands to complex dialogues requires a corresponding shift in user approach. It’s no longer enough to just ask a question; users must learn to frame questions, provide context, and critically evaluate the AI’s responses, a discipline that mattered far less with earlier, less sophisticated systems.
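For contrast with today’s models, the scripted pattern matching used by the early chatbots in the timeline above can be sketched in a few lines. This is a loose illustration in the spirit of ELIZA, not its actual script; the rules, `FALLBACK` and the `reply` function are hypothetical and written only for this example.

```python
import re

# Hypothetical rules written for this sketch; not ELIZA's actual script.
RULES = [
    (re.compile(r"\bi feel (.+)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (\w+)", re.IGNORECASE), "Tell me more about your {0}."),
]
FALLBACK = "Please go on."

def reply(message):
    """Return the filled-in template of the first rule whose pattern matches."""
    cleaned = message.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(cleaned)
        if match:
            return template.format(match.group(1))
    return FALLBACK  # nothing matched, so fall back to a stock phrase

print(reply("I feel anxious about chatbots."))  # Why do you feel anxious about chatbots?
print(reply("The weather is nice."))            # Please go on.
```

The program simply echoes fragments of the user’s own words back inside fixed templates, which is why such conversations quickly feel superficial or repetitive.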

Broader Implications: Navigating the AI Revolution Responsibly

The implications of widespread, uncritical AI interaction are far-reaching. From an individual perspective, it can lead to:

- Erosion of Critical Thinking: Over-reliance on AI for answers can diminish users’ own research and analytical skills.

- Increased Vulnerability to Manipulation: Sophisticated AI can be used to generate convincing phishing attempts, propaganda, or personalized scams.

- Emotional and Psychological Impact: Developing unhealthy attachments to AI companions or experiencing distress from AI-generated misinformation can have mental health consequences.

On a societal level, the uncritical adoption of AI poses risks to:

- Democracy and Information Integrity: The spread of AI-generated misinformation and deepfakes can undermine public trust and manipulate public opinion.

- Education and Skill Development: If students rely solely on AI for assignments, it can hinder their learning and development of essential skills.

- Job Market Disruption: While AI can create new jobs, its ability to automate tasks raises concerns about widespread job displacement if individuals are not equipped with the skills to adapt.

Bartlett’s book advocates for a proactive approach, positioning "scepticism" as a vital tool. This isn’t about rejecting AI, but about approaching it with a healthy dose of critical inquiry. It’s about recognizing that AI is a tool, and like any powerful tool, it can be used for good or ill. The responsibility lies with the user to understand its capabilities and limitations.

As Ackerley concludes, even for those who may not be frequent users of AI chatbots, Bartlett’s "How to Talk to AI" offers invaluable insights into the evolving digital landscape. In a world increasingly mediated by algorithms, the ability to converse intelligently and critically with artificial intelligence is no longer a niche skill but a fundamental aspect of digital literacy and responsible citizenship. The book serves as a call to action, urging readers to become more informed and discerning participants in the age of AI.