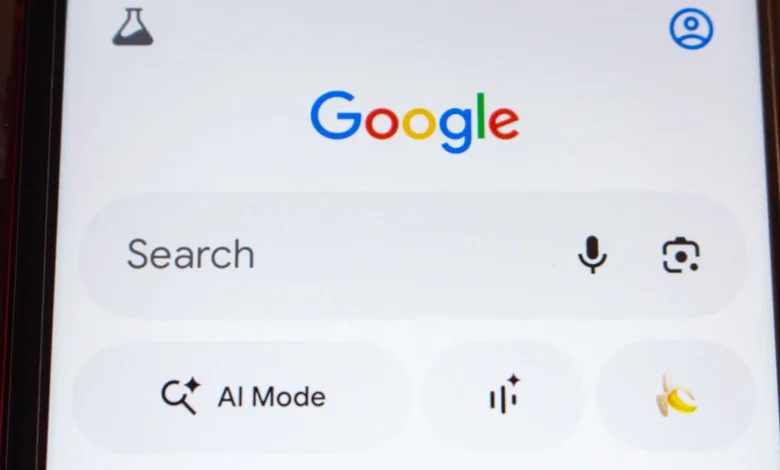

Google AI Mode in Chrome Gets Side-by-Side Browsing

The Evolution of AI Integration in Google Chrome

The introduction of side-by-side browsing and enhanced contextual search is the culmination of a rapid development cycle that began with the launch of Search Generative Experience (SGE) in 2023. Initially, AI interactions within Chrome were siloed: users had to navigate to specific Google Search pages or open separate chat interfaces to engage with generative models. The first major step toward integration came when Google brought AI Mode to the Chrome address bar, or "omnibox," allowing users to trigger AI queries without leaving their current tab.

The current update addresses a persistent friction point in digital research: the "tab-switching" tax. Previously, when a user engaged with AI Mode and clicked a suggested link or a source, the browser would navigate away from the AI interface to the new URL. This forced users to manually toggle between the AI’s summary and the source material to verify facts or find deeper details. With the new side-by-side rendering, clicking a link within the AI Mode panel opens the destination webpage in a split-screen view. The AI interface stays on one side of the screen while the live website occupies the other, so users can ask follow-up questions about the specific content they are viewing without losing their place.

Enhancing Context Through the New "Plus" Menu

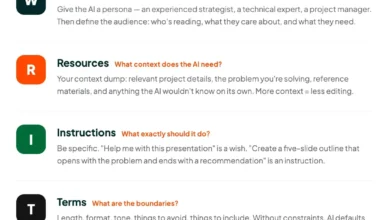

Perhaps the most technically significant aspect of this update is the introduction of the "plus" menu, a feature designed to solve the problem of "context fragmentation." In traditional search, the engine only knows what the user types into the search box. In the new AI-enhanced Chrome, the engine can now "see" what the user is working on across multiple formats.

The plus menu, accessible from both the New Tab page and within the AI Mode interface, allows users to explicitly feed context to the AI. Users can select currently open tabs, uploaded images, or PDF documents and attach them to a single query. For example, a student researching a thesis could select three open academic papers (tabs), a chart (image), and a syllabus (PDF), and ask the AI to "synthesize these sources into an outline." This multimodal capability moves Google Chrome closer to the functionality of dedicated AI productivity tools like NotebookLM, but within the ubiquitous environment of the browser.

Furthermore, Google has made its creative tools available in more places. Features such as "Canvas" (for document generation) and image creation tools, which were previously nested deep within AI Mode menus, are now available on any Chrome surface that features the plus menu. This suggests a future where AI-driven content creation is a standard utility of the browser, much like the "copy" and "paste" functions.

Chronology of Google’s Browser-Based AI Strategy

To understand the weight of this update, it is necessary to look at the timeline of Google’s AI implementation within its ecosystem:

- May 2023: Google introduces Search Generative Experience (SGE) at Google I/O, testing AI-powered summaries at the top of search results.

- Late 2023: Google begins experimenting with "Help me write" and other generative features directly within Chrome’s text fields.

- February 2024: Google rebrands Bard as Gemini and introduces the Gemini 1.5 Pro model, whose long context window (up to 1 million tokens) can process roughly an hour of video or tens of thousands of lines of code.

- August 2024: Google integrates Gemini into the Chrome omnibox, allowing users to type "@gemini" or use specific shortcuts to launch AI queries.

- Current Update: Launch of side-by-side browsing and multimodal context menus in the U.S. market, signaling a move toward a "native" AI experience.

Market Context and Competitive Pressure

Google’s push into browser-integrated AI is not happening in a vacuum. The company faces stiff competition from Microsoft Edge, which has built its Copilot AI (powered by OpenAI’s GPT-4) into a persistent sidebar. Edge’s sidebar has long offered features similar to side-by-side browsing, giving Microsoft a temporary advantage in productivity workflows.

Additionally, "challenger" browsers like Arc by The Browser Company have gained a cult following by rethinking the browser UI entirely around AI-driven organization and "Live Folders." By bringing side-by-side browsing to Chrome, Google is defending its dominant market share, which currently sits at approximately 65% globally, by ensuring that users do not have to switch to Edge or Arc to access modern, AI-assisted navigation.

Official Perspectives and User Experience Goals

In their joint announcement, Robby Stein and Mike Torres emphasized that the goal is to make AI feel like a natural extension of the user’s intent rather than a separate destination. Stein noted that the update is specifically designed for complex tasks that require synthesis, such as planning travel, comparing products, or conducting deep academic research.

"We want to make it as easy as possible to bring the information you’re already looking at into your AI-powered conversations," the executives stated. This focus on "information you’re already looking at" highlights a shift in search philosophy: from "finding" information to "interacting" with it.

Technical and Privacy Considerations

The ability to add open tabs and files as context raises important questions regarding data privacy and local processing. While Google has not detailed the exact local-versus-cloud split for these specific features, it is widely understood that Chrome is increasingly utilizing "on-device" AI for smaller tasks while offloading complex reasoning to Gemini’s cloud-based models.

When a user adds a tab or a PDF as context, the browser must parse that content to provide an accurate response. Google maintains that this data is used to generate the specific response requested by the user and is subject to the privacy protections outlined in its generative AI terms of service. However, for enterprise users and privacy-conscious individuals, the "context-aware" nature of the browser will likely remain a point of scrutiny, as the line between a "tool that helps" and a "tool that watches" becomes increasingly thin.

Implications for the Open Web and SEO

The introduction of side-by-side browsing has profound implications for digital publishers and the broader SEO (Search Engine Optimization) landscape. For decades, the goal of a search engine was to send a user to a website and then "exit" the experience. With side-by-side browsing, Google keeps the user within its own interface even after they have arrived at a publisher’s site.

This could lead to a decrease in "dwell time" metrics as traditionally measured, as users may spend more time interacting with the AI panel than scrolling through the website’s full content. Conversely, it could improve the quality of traffic; users who stay on a page while asking an AI for clarification are arguably more engaged with the material. Publishers may need to adapt by making their content easily "parsable" by AI models, so that the summaries provided in the side-by-side panel are accurate and encourage further exploration of the source.

Looking Ahead: The Global Rollout

Currently, these features are available to Chrome users on desktop platforms within the United States. Google has confirmed that a global rollout is planned, with other countries and languages to follow in the coming months. This phased approach allows Google to monitor the impact on server load and refine the user interface based on initial feedback.

As AI Mode becomes more deeply embedded, the industry anticipates the next logical step: proactive AI. Future iterations of Chrome may not wait for a user to click a "plus" menu but might instead suggest relevant tabs or files to include as context based on the user’s current activity. For now, the addition of side-by-side browsing and contextual inputs marks a definitive end to the era of the "static" browser, ushering in a period where the web is something we don’t just view, but something we converse with in real time.