The Evolution of AI Search Crawling Trends and the Surge in LLM-Driven Referral Traffic for Local Businesses

The landscape of the open web is undergoing a fundamental transformation as artificial intelligence (AI) agents and Large Language Models (LLMs) shift from passive observers to active participants in the digital ecosystem. A comprehensive new analysis of 858,457 websites hosted on the Duda platform has provided an unprecedented look into how AI crawlers interact with web content at scale. This data not only illuminates the rapid growth of AI-driven crawling activity but also offers a strategic roadmap for Search Engine Optimization (SEO) professionals and small-to-medium-sized businesses (SMBs) looking to capture traffic from the burgeoning "Answer Engine" economy. As traditional search engines pivot toward generative responses, the way content is discovered, indexed, and surfaced is being redefined by a handful of dominant AI players, led primarily by OpenAI.

AI crawling is no longer a niche phenomenon; it now touches a majority of the web. According to the Duda study, 59% of the nearly 860,000 sites analyzed received at least one visit from an AI crawler in a single month. This activity amounted to tens of millions of requests, signaling that AI systems are now a permanent and pervasive fixture of web infrastructure. Crucially, the research indicates that this crawling is not evenly distributed across AI providers but is heavily concentrated, reflecting the current market hegemony in the AI sector. OpenAI’s crawlers are responsible for the vast majority of these requests, in line with ChatGPT’s position as the leading AI tool for information retrieval and real-time query resolution.

The Shift from Indexing to Real-Time Grounding

Historically, web crawling was a process of "indexing"—gathering information to store in a massive database for later retrieval by a search engine. However, the Duda analysis reveals a significant shift in intent. AI crawlers are increasingly fetching content to "ground" answers in real time. This means that instead of merely building a library of the web, these bots are visiting sites in response to specific user queries to ensure that the AI-generated response is accurate, current, and cited.

This trend toward real-time retrieval is almost entirely driven by ChatGPT. The data shows that OpenAI’s activity is uniquely tied to user-initiated sessions, where the AI needs to verify a fact or pull a specific business detail to satisfy a prompt. This changes the fundamental nature of web traffic. Traditional SEO focused on ranking for keywords to appear in a list of links, whereas the new paradigm of Answer Engine Optimization (AEO) requires content to be formatted and verified in a way that lets an AI agent confidently extract it to build a comprehensive answer. Google-Agent, a newer crawler, is expected to shift these dynamics as it integrates more deeply with Google’s Search Generative Experience (SGE), but for now the market remains dominated by the OpenAI ecosystem.
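To make the distinction between background crawling and real-time fetching concrete, here is a minimal sketch of how a site owner might separate the two in server logs. It assumes that user-initiated fetches identify themselves with tokens such as `ChatGPT-User`, while background crawlers use tokens such as `GPTBot`. The token lists, the category names, and the sample log lines are illustrative assumptions, not the method used in the Duda study.

```python
from collections import Counter

# Illustrative token lists, not an exhaustive or official registry.
# Vendors document their own user agents and change them over time.
USER_TRIGGERED_TOKENS = ("ChatGPT-User", "Perplexity-User")
CRAWL_TOKENS = ("GPTBot", "OAI-SearchBot", "ClaudeBot", "PerplexityBot")


def classify_user_agent(user_agent: str) -> str:
    """Label a request as a real-time fetch, a background crawl, or other."""
    ua = user_agent.lower()
    if any(token.lower() in ua for token in USER_TRIGGERED_TOKENS):
        return "real_time_fetch"
    if any(token.lower() in ua for token in CRAWL_TOKENS):
        return "background_crawl"
    return "other"


# Simplified, hypothetical user-agent strings standing in for a log excerpt.
sample_log = [
    "Mozilla/5.0 (compatible; ChatGPT-User/1.0; +https://openai.com/bot)",
    "Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)",
    "Mozilla/5.0 (compatible; ClaudeBot/1.0; +claudebot@anthropic.com)",
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/124.0 Safari/537.36",
]

counts = Counter(classify_user_agent(ua) for ua in sample_log)
print(dict(counts))
```

Matching on substrings is deliberately loose, and user-agent strings can be spoofed, so a production setup would likely also verify the requester against the IP ranges each vendor publishes.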

Year-Over-Year Growth and Referral Patterns

The growth of referral traffic from LLMs has been explosive over the past twelve months. Unlike traditional search traffic, which has seen plateauing growth in many sectors, AI-driven referrals are increasing across the board. The study highlights that this growth is not marginal; it is becoming a significant source of discovery for businesses. Sites that are successfully integrated into the AI ecosystem are seeing a sharp increase in human visitors who are directed to their pages via links embedded within AI responses.

A critical finding of the research is the correlation between AI crawling activity and actual human traffic. The data shows that sites allowing AI systems to crawl them consistently exhibit stronger engagement metrics. On average, sites that were crawled by AI systems received 527.7 sessions, compared to just 164.9 sessions for sites that were not crawled. Crawled sites therefore averaged about 3.2 times as many sessions: 320% of the uncrawled figure, or roughly 220% more. The researchers note that this does not necessarily establish direct causation, since AI systems are more likely to crawl sites that already have high authority and human demand, but it does suggest a symbiotic relationship. AI systems act as a force multiplier for sites that are already performing well, reinforcing their visibility and authority in a virtuous cycle.
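The gap between the two session averages can be expressed either as a ratio or as a percentage increase, and the two are easy to confuse. The short calculation below uses only the two averages reported above; the function names are chosen here for illustration.

```python
def session_ratio(crawled: float, not_crawled: float) -> float:
    """How many times larger the crawled average is."""
    return crawled / not_crawled


def percent_increase(crawled: float, not_crawled: float) -> float:
    """How much higher the crawled average is, as a percentage of the baseline."""
    return (crawled - not_crawled) / not_crawled * 100


# Average sessions reported in the study.
CRAWLED_SESSIONS = 527.7
NOT_CRAWLED_SESSIONS = 164.9

ratio = session_ratio(CRAWLED_SESSIONS, NOT_CRAWLED_SESSIONS)
increase = percent_increase(CRAWLED_SESSIONS, NOT_CRAWLED_SESSIONS)

print(f"ratio: {ratio:.2f}x")              # about 3.20x, i.e. 320% of the baseline
print(f"increase: {increase:.1f}% more")   # about 220% more than the baseline
```

In other words, the 320% figure describes crawled traffic as a share of uncrawled traffic, while the increase over the baseline is about 220%.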

Factors Correlating with Increased AI Crawling

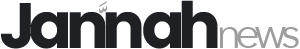

The analysis identified three primary signals that correlate with high AI crawler activity: external integrations, structured site features, and content depth. These factors serve as "trust signals" that help AI agents verify the legitimacy and relevance of a business.

- External Integrations: Sites that are connected to third-party platforms such as Google Business Profile, Yelp, and various review systems are crawled significantly more often. AI systems use these integrations to validate that a business is real and operational. If an AI agent sees consistent information across multiple authoritative platforms, it is more likely to return to the source site to fetch detailed information for a user query.

- Structured Business Data: The use of machine-readable data, particularly Local Business Schema, is one of the strongest predictors of AI crawl frequency. The study found a direct linear relationship between the completeness of schema fields, including business name, address, phone number (NAP), operating hours, and social profiles, and the rate at which AI bots visited the site. Sites with "complete" schema profiles were prioritized by crawlers, as the information is easy to parse and verify without the risk of "hallucination" by the AI.

- Content Depth and Volume: AI systems are data-hungry. The research found that sites with a larger volume of usable content (blogs, service pages, and detailed product descriptions) receive far more crawler visits. This suggests that AI agents treat these sites as "knowledge hubs." When a site offers a depth of information, it provides more opportunities for the AI to retrieve and reuse that data in various contexts, leading to more frequent re-indexing and retrieval requests.
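As an illustration of the structured-data signal, the sketch below builds a schema.org `LocalBusiness` JSON-LD object and computes a simple completeness score over the fields named above. The property names (`name`, `address`, `telephone`, `openingHours`, `sameAs`) come from schema.org; the scoring rule, the helper names, and the sample business are assumptions made for this example, since the study's own definition of a "complete" profile is not given here.

```python
import json

# Fields checked for completeness. This list is an assumption for the example,
# mirroring the fields named in the article (NAP, hours, social profiles).
CHECKED_FIELDS = ("name", "address", "telephone", "openingHours", "sameAs")


def build_local_business(**fields) -> dict:
    """Return a LocalBusiness JSON-LD dict, skipping empty values."""
    doc = {"@context": "https://schema.org", "@type": "LocalBusiness"}
    doc.update({key: value for key, value in fields.items() if value})
    return doc


def completeness(doc: dict) -> float:
    """Share of checked fields that are present and non-empty (0.0 to 1.0)."""
    present = sum(1 for field in CHECKED_FIELDS if doc.get(field))
    return present / len(CHECKED_FIELDS)


# Hypothetical business used purely for illustration.
business = build_local_business(
    name="Example Bakery",
    address={
        "@type": "PostalAddress",
        "streetAddress": "123 Main St",
        "addressLocality": "Springfield",
        "addressRegion": "IL",
        "postalCode": "62701",
        "addressCountry": "US",
    },
    telephone="+1-555-0100",
    openingHours=["Mo-Fr 08:00-17:00"],
    sameAs=[],  # no social profiles yet, so this field is omitted
)

print(json.dumps(business, indent=2))
print(f"completeness: {completeness(business):.0%}")
```

The resulting JSON would typically be embedded in the page inside a `<script type="application/ld+json">` tag. A score like this is only a rough internal checklist; it does not predict how any particular crawler will behave.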

Market Concentration and the Competitive Landscape

The concentration of crawling activity within a few major players has significant implications for the future of the open web. OpenAI’s dominance suggests that for most businesses, optimizing for ChatGPT is currently the most effective way to capture AI referral traffic. Anthropic’s Claude follows at a much smaller share, indicating a different usage pattern—perhaps more focused on creative or technical analysis rather than local business discovery.

However, the landscape is poised for a major shift with the full rollout of Google’s "Google-Agent." As Google pivots its search engine toward a more agentic model, it will likely leverage its existing dominance in the search market to challenge OpenAI’s lead in real-time retrieval. For businesses, this means that while OpenAI is the current priority, maintaining compatibility with Google’s evolving standards remains essential. The emergence of "OpenClaw" and other open-source crawling initiatives may also play a role in democratizing access to web data, though they currently represent a minimal portion of total activity.

The Strategic Shift: From Discovery to Verification

For marketers and business owners, the takeaway from the Duda analysis is a shift in strategy. In the traditional search era, the goal was to "get discovered." In the AI era, the goal is to "get verified." AI systems are not designed to discover weak or inactive sites and elevate them; rather, they are designed to find the most reliable information to answer a user’s question.

The data suggests that AI agents follow human demand. They return to sites that already attract human visitors and possess strong external validations. Therefore, the focus for businesses should not be on "tricking" an AI crawler into visiting, but on building a robust digital footprint that includes:

- High-quality, deep content that addresses user intent.

- Complete and accurate structured data (Schema) to facilitate machine reading.

- Strong presence on third-party validation platforms (reviews and directories).

Broader Implications for the Future of Search

The findings of this study point toward a future where the distinction between "search" and "answers" continues to blur. As AI crawlers move away from simple indexing and toward real-time grounding, the "click-through rate" (CTR) of the future may depend less on being the first link in a list and more on being the cited source in a generated paragraph.

This evolution poses challenges for the traditional ad-supported model of the web. If AI agents provide the answer directly on the search results page, the incentive for users to visit the source site may diminish. However, the Duda data offers a counter-narrative: sites that are highly visible to AI agents are actually seeing more human traffic, not less. This suggests that AI is not yet replacing the need for source material but is instead acting as a sophisticated filter that directs high-intent users to the most authoritative and well-structured destinations.

In conclusion, the era of AI-scale crawling has arrived. With 59% of sites already being touched by these systems, and crawled sites averaging roughly 3.2 times the sessions of uncrawled ones, the stakes for AEO are high. By focusing on structured data, content depth, and external validation, businesses can ensure they are not just visible to the AI agents of today, but are positioned to thrive in the agent-driven web of tomorrow. The research from Duda marks this transition clearly: the integration of AI into the fabric of the internet is no longer a future projection but a present reality.